Chapter 4

Making Predictions

Correlation

Measuring the relation between two variables¶

In the last chapter, we began developing a method that would allow us to estimate the birth weight of babies based on the number of gestational days. In this chapter, we develop a more general approach to measuring the relation between two variables and estimating the value of one variable given the value of another.

Our proposed estimate in the example of birth weights was simple:

- Find the ratio of the birth weight to gestational days for each baby in the sample.

- Find the median of the ratios.

- For a new baby, multiply the number of gestational days by that median. This is our estimate of the baby's birth weight.

Here are the data; the column r_bwt_gd contains the ratio of birth weight to gestational days.

baby = Table.read_table('baby.csv')

baby['r_bwt_gd'] = baby['birthwt']/baby['gest_days']

baby

The median of the ratios is just about 0.429 ounces per day:

np.median(baby['r_bwt_gd'])

So we can construct our proposed estimate by multiplying the number of gestational days of each baby by 0.429. The column est_wt contains these estimates.

baby['est_bwt'] = 0.429*baby['gest_days']

baby.drop('r_bwt_gd')

Because of the way they are constructed – by multiplying the gestational days by a constant – The estimates all lie on a straight line.

bb0 = baby.select(['gest_days', 'est_bwt'])

bb0.scatter('gest_days')

plots.ylim(40,200)

plots.xlabel('gestational days')

plots.ylabel('estimated birth weight')

A natural question to ask is, "How good are these estimates?" To get a sense of the answer, let us visualize the data by drawing a scatter plot of birth weight versus gestational days. The scatter plot consists of one point for each of the 1,174 babies in the sample. The horizontal axis represents the number of gestational days and the vertical axis represents the birth weight.

The plot is generated by using a Table method called scatter. The argument is the label of the column that contains the values to be plotted on the horizontal axis. For each of the other columns in the table, a scatter diagram is produced with the corresponding variable on the vertical axis. To generate just one scatter plot, therefore, we start by selecting only the variables that we need.

gd_bwt = baby.select(['gest_days', 'birthwt'])

gd_bwt.scatter('gest_days')

plots.xlabel('gestational days')

plots.ylabel('birth weight')

How good are the estimates that we calculated? To get a sense of this, we will re-draw the scatter plot and overlay the line of estimates. This can be done by using the argument overlay=True when we call scatter.

gd_bwt_est = baby.select(['gest_days', 'birthwt', 'est_bwt'])

gd_bwt_est.scatter('gest_days', overlay=True)

plots.xlabel('gestational days')

The line appears to be roughly in the center of the scatter diagram; if we use the line for estimation, the amount of over-estimation will be comparable to the amount of under-estimation, roughly speaking. This is a natural criterion for determining a "good" straight line of estimates.

Let us see if we can formalize this idea and create a "best" straight line of estimates.

To see roughly where such a line must lie, we will start by attempting to estimate maternal pregnancy weight based on the mother's height. The heights have been measured to the nearest inch, resulting in the vertical stripes in the scatter plot.

ht_pw = baby.select(['mat_ht', 'mat_pw'])

plots.scatter(ht_pw['mat_ht'], ht_pw['mat_pw'], s=5, color='gold')

plots.xlabel('height (inches)')

plots.ylabel('pregnancy weight (pounds)')

Suppose we know that one of the women is 65 inches tall. What would be our estimate for her pregnancy weight?

We know that the point corresponding to this woman must be on the vertical strip at 65 inches. A natural estimate of her pregnancy weight is the average of the weights in that strip; the rough size of the error in this estimate will be the SD of the weights in the strip.

For a woman who is 60 inches tall, our estimate of pregnancy weight would be the average of the weights in the vertical strip at 60 inches. And so on.

Here are the average pregnancy weights for all the values of the heights.

v_means = ht_pw.group('mat_ht', np.mean)

v_means

In the figure below, these averages are overlaid on the scatter plot of pregnancy weight versus height, and appear as green dots at the averages of the vertical strips. The graph of green dots is called the graph of averages. A graph of averages shows the average of the variable on the vertical axis, for each value of the variable on the horizontal axis. It can be used for estimating the variable on the vertical axis, given the variable on the horizontal.

plots.scatter(baby['mat_ht'], baby['mat_pw'], s=5, color='gold')

plots.scatter(v_means['mat_ht'], v_means['mat_pw mean'], color='g')

plots.xlabel('height (inches)')

plots.ylabel('pregnancy weight (pounds)')

plots.title('Graph of Averages')

For these two variables, the graph of averages looks roughly linear: the green dots are fairly close to a straight line for much of the scatter. That straight line is the "best" straight line for estimating pregnancy weight based on height.

To identify the line more precisely, let us examine the oval or football shaped scatter plot.

First, we note that the position of the line is a property of the shape of the scatter, and is not affected by the units in which the heights and weights were measured. Therefore, we will measure both variables in standard units.

Identifying the line that goes through the graph of averages¶

x_demo = np.random.normal(0, 1, 10000)

z_demo = np.random.normal(0, 1, 10000)

y_demo = 0.5*x_demo + np.sqrt(.75)*z_demo

plots.scatter(x_demo, y_demo, s=10)

plots.xlim(-4, 4)

plots.ylim(-4, 4)

plots.axes().set_aspect('equal')

plots.plot([-4, 4], [-4*0.6,4*0.6], color='g', lw=1)

plots.plot([-4,4],[-4,4], lw=1, color='r')

plots.plot([1.5,1.5], [-4,4], lw=1, color='k')

plots.xlabel('x in standard units')

plots.ylabel('y in standard units')

Here is a football shaped scatter plot. We will follow the usual convention of calling the variable along the horizontal axis $x$ and the variable on the vertical axis $y$. Both variables are in standard units. This implies that the center of the football, corresponding to the point where both variables are at their average, is the origin (0, 0).

Because of the symmetry of the figure, resulting from both variables being measured in standard units, it is natural to see whether the 45 degree line is the best line for estimation. The 45 degree line has been drawn in red. For points on the red line, the value of $x$ in standard units is equal to the value of $y$ in standard units.

Suppose the given value of $x$ is at the average, in other words at 0 on the standard units scale. The points corresponding to that value of $x$ are on the vertical strip at $x$ equal to 0 standard units. The average of the values of $y$ in that strip can be seen to be at 0, by symmetry. So the straight line of estimates should pass through (0, 0).

A careful look at the vertical strips shows that the red line does not work for estimating $y$ based on other values of $x$. For example, suppose the value of $x$ is 1.5 standard units. The black vertical line corresponds to this value of $x$. The points on the scatter whose value of $x$ is 1.5 standard units are the blue dots on the black line; their values on the vertical scale range from about 2 to about 3. It is clear from the figure that the red line does not pass through the average of these points; the red line is too steep.

To get to the average of the vertical strip at $x$ equal to 1.5 standard units, we have to come down from the red line to the center of the strip. The green line is at that point; it has been drawn by connecting the center of the strip to the point (0, 0) and then extending the line on both sides.

The scatter plot is linear, so the green line picks off the centers of the vertical strips. It is the line that should be used to estimate $y$ based on $x$ when both variables are in standard units.

The slope of the red line is equal to 1. The green line is less steep, and so its slope is less than 1. Because it is sloping upwards in the figure, we have established that its slope is between 0 and 1.

Summary. When both variables are measured in standard units, the best line for estimating $y$ based on $x$ is less steep than the 45 degree line and thus has slope less than one. The discussion above was based on a scatter plot sloping upwards, and so the slope of the best line is a number between 0 and 1. For scatter diagrams sloping downwards, the slope would be a number between 0 and -1. For the slope to be close to -1 or 1, the red and green lines must be close, or in other words, the scatter diagram must be tightly clustered around a straight line.

The Correlation Coefficient¶

This number between -1 and 1 is called the correlation coefficient and is said to measure the correlation between the two variables.

- The correlation coefficient $r$ is a number between $-1$ and 1.

- When both the variables are measured in standard units, $r$ is the slope of the "best straight line" for estimating $y$ based on $x$.

- $r$ measures the extent to which the scatter plot clusters around a straight line of positive or negative slope.

The function football takes a value of $r$ as its argument and generates a football shaped scatter plot with correlation roughly $r$. The red line is the 45 degree line $y=x$, corresponding to points that are the same number of standard units in both variables. The green line, $y = rx$, is a smoothed version of the graph of averages. Points on the green line correspond to our estimates of the variable on the vertical axis, given values on the horizontal.

Call football a few times, with different values of $r$ as the argument, and see how the football changes. Positive $r$ corresponds to positive association: above-average values of one variable are associated with above-average values of the other, and the scatter plot slopes upwards.

Notice also that the bigger the absolute value of $r$, the more clustered the points are around the green line of averages, and the closer the green line is to the red line of equal standard units.

When $r=1$ the scatter plot is perfectly linear and slopes upward. When $r=-1$, the scatter plot is perfectly linear and slopes downward. When $r=0$, the scatter plot is a formless cloud around the horizontal axis, and the variables are said to be uncorrelated.

# Football shaped scatter, both axes in standard units

# Argument of function: correlation coefficient r

# red line: slope = 1 (or -1)

# green line: smoothed graph of averages, slope = r

# Use the green line for estimating y based on x

football(0.6)

football(0.2)

football(0)

football(-0.7)

Calculating $r$¶

The formula for $r$ is not apparent from our observations so far; it has a mathematical basis that is outside the scope of this class. However, the calculation is straightforward and helps us understand several of the properties of $r$.

Formula for $r$:

- $r$ is the average of the products of the two variables, when both variables are measured in standard units.

Here are the steps in the calculation. We will apply the steps to a simple table of values of $x$ and $y$.

t = Table([np.arange(1,7,1), [2, 3, 1, 5, 2, 7]], ['x','y'])

t

Based on the scatter plot, we expect that $r$ will be positive but not equal to 1.

plots.scatter(t['x'], t['y'], s=30, color='r')

plots.xlim(0, 8)

plots.ylim(0, 8)

plots.xlabel('x')

plots.ylabel('y', rotation=0)

Step 1. Convert each variable to standard units.

t['x_su'] = (t['x'] - np.mean(t['x']))/np.std(t['x'])

t['y_su'] = (t['y'] - np.mean(t['y']))/np.std(t['y'])

t

Step 2. Multiply each pair of standard units.

t['su_product'] = t['x_su']*t['y_su']

t

Step 3. $r$ is the average of the products computed in Step 2.

# r is the average of the products of standard units

r = np.mean(t['su_product'])

r

As expected, $r$ is positive but not equal to 1.

The calculation shows that:

- $r$ is a pure number; it has no units. This is because $r$ is based on standard units.

- $r$ is unaffected by changing the units on either axis. This too is because $r$ is based on standard units.

- $r$ is unaffected by switching the axes. Algebraically, this is because the product of standard units does not depend on which variable is called $x$ and which $y$. Geometrically, switching axes reflects the scatter plot about the line $y=x$, but does not change the amount of clustering nor the sign of the association.

plots.scatter(t['y'], t['x'], s=30, color='r')

plots.xlim(0, 8)

plots.ylim(0, 8)

plots.xlabel('y')

plots.ylabel('x', rotation=0)

The NumPy method corrcoef can be used to calculate $r$. The arguments are an array containing the values of $x$ and another containing the corresponding values of $y$. The program evaluates to a correlation matrix, which is this case is a 2x2 table indexed by $x$ and $y$. The top left element is the correlation between $x$ and $x$, and hence is 1. The top right element is the correlation between $x$ and $y$, which is equal to the correlation between $y$ and $x$ displayed on the bottom left. The bottom right element is 1, the correlation between $y$ and $y$.

np.corrcoef(t['x'], t['y'])

For the purposes of this class, correlation matrices are unnecessary. We will define our own function corr to compute $r$, based on the formula that we used above. The arguments are the name of the table and the labels of the columns containing the two variables. The function returns the mean of the products of standard units, which is $r$.

def corr(table, column_A, column_B):

x = table[column_A]

y = table[column_B]

return np.mean(((x-np.mean(x))/np.std(x))*((y-np.mean(y))/np.std(y)))

Here are examples of calling the function. Notice that it gives the same answer to the correlation between $x$ and $y$ as we got by using np.corrcoef and earlier by direct application of the formula for $r$. Notice also that the correlation between maternal age and birth weight is very low, confirming the lack of any upward or downward trend in the scatter diagram.

corr(t, 'x', 'y')

corr(baby, 'birthwt', 'gest_days')

corr(baby, 'birthwt', 'mat_age')

plots.scatter(baby['mat_age'], baby['birthwt'])

plots.xlabel("mother's age")

plots.ylabel('birth weight')

Properties of Correlation¶

Correlation is a simple and powerful concept, but it is sometimes misused. Before using $r$, it is important to be aware of the following points.

Correlation only measures association. Correlation does not imply causation. Though the correlation between the weight and the math ability of children in a school district may be positive, that does not mean that doing math makes children heavier or that putting on weight improves the children's math skills. Age is a confounding variable: older children are both heavier and better at math than younger children, on average.

Correlation measures linear association. Variables that have strong non-linear association might have very low correlation. Here is an example of variables that have a perfect quadratic relation $y = x^2$ but have correlation equal to 0.

tsq = Table([np.arange(-4, 4.1, 0.5), np.arange(-4, 4.1, 0.5)**2],

['x','y'])

plots.scatter(tsq['x'], tsq['y'])

plots.xlabel('x')

plots.ylabel('y', rotation=0)

corr(tsq, 'x', 'y')

- Outliers can have a big effect on correlation. Here is an example where a scatter plot for which $r$ is equal to 1 is turned into a plot for which $r$ is equal to 0, by the addition of just one outlying point.

t = Table([[1,2,3,4],[1,2,3,4]], ['x','y'])

plots.scatter(t['x'], t['y'], s=30, color='r')

plots.xlim(0, 6)

plots.ylim(-0.5,6)

corr(t, 'x', 'y')

t_outlier = Table([[1,2,3,4,5],[1,2,3,4,0]], ['x','y'])

plots.scatter(t_outlier['x'], t_outlier['y'], s=30, color='r')

plots.xlim(0, 6)

plots.ylim(-0.5,6)

corr(t_outlier, 'x', 'y')

- Correlations based on aggregated data can be misleading. As an example, here are data on the Critical Reading and Math SAT scores in 2014. There is one point for each of the 50 states and one for Washington, D.C. The column

Participation Ratecontains the percent of high school seniors who took the test. The next three columns show the average score in the state on each portion of the test, and the final column is the average of the total scores on the test.

sat2014 = Table.read_table('sat2014.csv').sort('State')

sat2014

The scatter diagram of Math scores versus Critical Reading scores is very tightly clustered around a straight line; the correlation is close to 0.985.

plots.scatter(sat2014['Critical Reading'], sat2014['Math'])

plots.xlabel('Critical Reading')

plots.ylabel('Math')

corr(sat2014, 'Critical Reading', 'Math')

It is important to note that this does not reflect the strength of the relation between the Math and Critical Reading scores of students. States don't take tests – students do. The data in the table have been created by lumping all the students in each state into a single point at the average values of the two variables in that state. But not all students in the state will be at that point, as students vary in their performance. If you plot a point for each student instead of just one for each state, there will be a cloud of points around each point in the figure above. The overall picture will be more fuzzy. The correlation between the Math and Critical Reading scores of the students will be lower than the value calculated based on state averages.

Correlations based on aggregates and averages are called ecological correlations and are frequently reported. As we have just seen, they must be interpreted with care.

Serious or tongue-in-cheek?¶

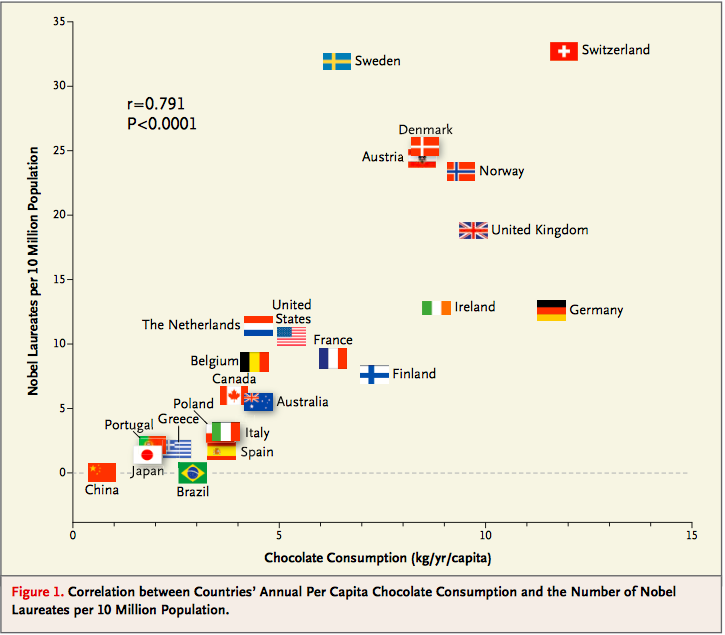

In 2012, a paper in the respected New England Journal of Medicine examined the relation between chocolate consumption and Nobel Prizes in a group of countries. The Scientific American responded seriously; others were more relaxed. The paper included the following graph:

See what you think about the analysis and also about the Reuters report that said, "The p-value Messerli calculated was 0.0001. 'This means that the odds of this being due to chance are less than 1 in 10,000," he said."

Regression

The regression line¶

The concepts of correlation and the "best" straight line through a scatter plot were developed in the late 1800's and early 1900's. The pioneers in the field were Sir Francis Galton, who was a cousin of Charles Darwin, and Galton's protégé Karl Pearson. Galton was interested in eugenics, and was a meticulous observer of the physical traits of parents and their offspring. Pearson, who had greater expertise than Galton in mathematics, helped turn those observations into the foundations of mathematical statistics.

The scatter plot below is of a famous dataset collected by Pearson and his colleagues in the early 1900's. It consists of the heights, in inches, of 1,078 pairs of fathers and sons.

The data are contained in the table heights, in the columns father and son respectively. In the previous section, we saw how to use the table method scatter to draw scatter plots. Here, the method scatter in the pyplot module of matplotlib (imported as plots) is used for the same purpose. The first argument of scatter is a array containing the variable on the horizontal axis. The second argument contains the variable on the vertical axis. The optional argument s=10 sets the size of the points; the default value is s=20.

# Scatter plot using matplotlib method

heights = Table.read_table('heights.csv')

plots.scatter(heights['father'], heights['son'], s=10)

plots.xlabel("father's height")

plots.ylabel("son's height")

Notice the familiar football shape. This is characteristic of variable pairs that follow a bivariate normal distribution: the scatter plot is oval, the distribution of each variable is roughly normal, and the distribution of the variable in each vertical and horizontal strip is roughly normal as well.

The correlation between the heights of the fathers and sons is about 0.5.

r = corr(heights, 'father', 'son')

r

The regression effect¶

The figure below shows the scatter plot of the data when both variables are measured in standard units. As we saw earlier, the red line of equal standard units is too steep to serve well as the line of estimates of $y$ based on $x$. Rather, the estimates are on the green line, which is flatter and picks off the centers of the vertical strips.

This flattening was noticed by Galton, who had been hoping that exceptionally tall fathers would have sons who were just as exceptionally tall. However, the data were clear, and Galton realized that the tall fathers have sons who are not quite as exceptionally tall, on average. Frustrated, Galton called this phenomenon "regression to mediocrity." Because of this, the line of best fit through a scatter plot is called the regression line.

Galton also noticed that exceptionally short fathers had sons who were somewhat taller relative to their generation, on average. In general, individuals who are away from average on one variable are expected to be not quite as far away from average on the other. This is called the regression effect.

# The regression effect

f_su = (heights['father']-np.mean(heights['father']))/np.std(heights['father'])

s_su = (heights['son']-np.mean(heights['son']))/np.std(heights['son'])

plots.scatter(f_su, s_su, s=10)

plots.plot([-4, 4], [-4, 4], color='r', lw=1)

plots.plot([-4, 4], [-4*r, 4*r], color='g', lw=1)

plots.axes().set_aspect('equal')

The Table method scatter can be used with the option fit_line=True to draw the regression line through a scatter plot.

# Plotting the regression line, using Table

heights.scatter('father', fit_line=True)

plots.xlabel("father's height")

plots.ylabel("son's height")

Karl Pearson used the observation of the regression effect in the data above, as well as in other data provided by Galton, to develop the formal calculation of the correlation coefficient $r$. That is why $r$ is sometimes called Pearson's correlation.

The equation of the regression line¶

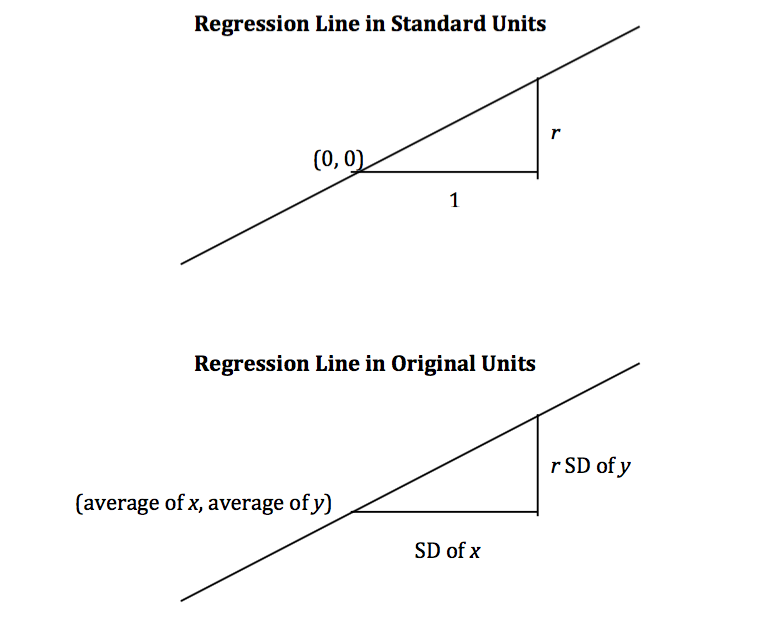

As we saw in the last section for football shaped scatter plots, when the variables $x$ and $y$ are measured in standard units, the best straight line for estimating $y$ based on $x$ has slope $r$ and passes through the origin. Thus the equation of the regression line can be written as:

$~~~~~~~~~~$ estimate of $y$, in $y$-standard units $~=~$ $r ~ \times$ (the given $x$, in $x$-standard units)

That is, $$ \frac{\mbox{estimate of}~y ~-~\mbox{average of}~y}{\mbox{SD of}~y} ~=~ r \times \frac{\mbox{the given}~x ~-~\mbox{average of}~x}{\mbox{SD of}~x} $$

The equation can be converted into the original units of the data, either by rearranging this equation algebraically, or by labeling some important features of the line both in standard units and in the original units.

It is a remarkable fact of mathematics that what we have observed to be true for football shaped scatter plots turns out to be true for all scatter plots, no matter what they look like.

Regardless of the shape of the scatter plot:

$$ \mbox{slope of the regression line} ~=~ \frac{r \cdot \mbox{SD of}~y}{\mbox{SD of}~x} ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~ $$$$ \mbox{intercept of the regression line} ~=~ \mbox{average of}~y ~-~ \mbox{slope} \cdot \mbox{(average of}~x\mbox{)} $$Calculation of the slope and intercept¶

The NumPy method np.polyfit takes as its first argument an array consisiting of the values of the given variable; its second argument an array consisting of the variable to be estimated; its third argument deg=1 specifies that we are fitting a straight line, that is, a polynomial of degree 1. It evaluates to an array consisting of the slope and the intercept of the regression line.

# Slope and intercept by NumPy method

np.polyfit(heights['father'], heights['son'], deg=1)

It is worth noting that the intercept of approximately 33.89 inches is not intended as an estimate of the height of a son whose father is 0 inches tall. There is no such son and no such father. The intercept is merely a geometric or algebraic quantity that helps define the line. In general, it is not a good idea to extrapolate, that is, to make estimates outside the range of the available data. It is certainly not a good idea to extrapolate as far away as 0 is from the heights of the fathers in the study.

The calculations of the slope and intercept of the regression line are straightforward, so we will set np.polyfit aside and write our own function to compute the two quantities. The function regress takes as its arguments the name of the table, the column label of the given variable, and the column label of the variable to be estimated; it evaluates to an array containing the slope and the intercept of the regression line.

# Slope and intercept of regression line

def regress(table, column_x, column_y):

r = corr(table, column_x, column_y)

reg_slope = r*np.std(table[column_y])/np.std(table[column_x])

reg_int = np.mean(table[column_y]) - reg_slope*np.mean(table[column_x])

return np.array([reg_slope, reg_int])

A call to regress yields the same results as the call to np.polyfit made above.

slope_int_h = regress(heights, 'father', 'son')

slope_int_h

Fitted values¶

We can use the regression line to get an estimate of the height of every son in the data. The estimated values of $y$ are called the fitted values. They all lie on a straight line. To calculate them, take a son's height, multiply it by the slope of the regression line, and add the intercept. In other words, calculate the height of the regression line at the given value of $x$.

# Estimates of sons' heights are on the regression line

heights['fitted value'] = slope_int_h[0]*heights['father'] + slope_int_h[1]

heights

Residuals¶

The amount of error in each of these regression estimates is the difference between the son's height and its estimate. These errors are called residuals. Some residuals are positive. These correspond to points that are above the regression line – points for which the regression line under-estimates $y$. Negative residuals correspond to the line over-estimating values of $y$.

# Error in the regression estimate: Distance between observed value and fitted value

# "Residual"

heights['residual'] = heights['son'] - heights['fitted value']

heights

As with deviations from average, the positive and negative residuals exactly cancel each other out. So the average (and sum) of the residuals is 0.

Error in the regression estimate¶

Though the average residual is 0, each individual residual is not. Some residuals might be quite far from 0. To get a sense of the amount of error in the regression estimate, we will start with a graphical description of the sense in which the regression line is the "best".

Our example is a dataset that has one point for every chapter of the novel "Little Women." The goal is to estimate the number of characters (that is, letters, punctuation marks, and so on) based on the number of periods. Recall that we attempted to do this in the very first lecture of this course.

lw = Table.read_table('little_women.csv')

# One point for each chapter

# Horizontal axis: number of periods

# Vertical axis: number of characters (as in a, b, ", ?, etc; not people in the book)

plots.scatter(lw['Periods'], lw['Characters'])

plots.xlabel('Periods')

plots.ylabel('Characters')

corr(lw, 'Periods', 'Characters')

The scatter plot is remarkably close to linear, and the correlation is more than 0.92.

a = [131, 14431]

b = [231, 20558]

c = [392, 40935]

d = [157, 23524]

def lw_errors(slope, intercept):

xlims = np.array([50, 450])

plots.scatter(lw['Periods'], lw['Characters'])

plots.plot(xlims, slope*xlims + intercept, lw=2)

plots.plot([a[0],a[0]], [a[1], slope*a[0] + intercept], color='r', lw=2)

plots.plot([b[0],b[0]], [b[1], slope*b[0] + intercept], color='r', lw=2)

plots.plot([c[0],c[0]], [c[1], slope*c[0] + intercept], color='r', lw=2)

plots.plot([d[0],d[0]], [d[1], slope*d[0] + intercept], color='r', lw=2)

plots.xlabel('Periods')

plots.ylabel('Characters')

The figure below shows the scatter plot and regression line, with four of the errors marked in red.

# Residuals: Deviations from the regression line

slope_int_lw = regress(lw, 'Periods', 'Characters')

lw_errors(slope_int_lw[0], slope_int_lw[1])

Had we used a different line to create our estimates, the errors would have been different. The picture below shows how big the errors would be if we were to use a particularly silly line for estimation.

# Errors: Deviations from a different line

lw_errors(-100, 50000)

Below is a line that we have used before without saying that we were using a line to create estimates. It is the horizontal line at the value "average of $y$." Suppose you were asked to estimate $y$ and were not told the value of $x$; then you would use the average of $y$ as your estimate, regardless of the chapter. In other words, you would use the flat line below.

Each error that you would make would then be a deviation from average. The rough size of these deviations is the SD of $y$.

In summary, if we use the flat line at the average of $y$ to make our estimates, the estimates will be off by the SD of $y$.

# Errors: Deviations from the flat line at the average of y

lw_errors(0, np.mean(lw['Characters']))

The Method of Least Squares¶

If you use any arbitrary line as your line of estimates, then some of your errors are likely to be positive and others negative. To avoid cancellation when measuring the rough size of the errors, we take the mean of the sqaured errors rather than the mean of the errors themselves. This is exactly analogous to our reason for looking at squared deviations from average, when we were learning how to calculate the SD.

The mean squared error of estimation using a straight line is a measure of roughly how big the squared errors are; taking the square root yields the root mean square error, which is in the same units as $y$.

Here is the second remarkable fact of mathematics in this section: the regression line minimizes the mean squared error of estimation (and hence also the root mean squared error) among all straight lines. That is why the regression line is sometimes called the "least squares line."

Computing the "best" line.

- To get estimates of $y$ based on $x$, you can use any line you want.

- Every line has a mean squared error of estimation.

- "Better" lines have smaller errors.

- The regression line is the unique straight line that minimizes the mean squared error of estimation among all straight lines.

Regression Functions¶

Regression is one of the most commonly used methods in statistics, and we will be using it frequently in the next few sections. It will be helpful to be able to call functions to compute the various quantities connected with regression. The first two of the functions below have already been defined; the rest are defined below.

corr: the correlation coefficientregress: the slope and intercept of the regression linefit: the fitted value at one given value of $x$fitted_values: the fitted values at all the values of $x$ in the dataresiduals: the residualsscatter_fit: scatter plot and regression lineresidual_plot: plot of residuals versus $x$

# Correlation coefficient

def corr(table, column_A, column_B):

x = table[column_A]

y = table[column_B]

x_su = (x-np.mean(x))/np.std(x)

y_su = (y-np.mean(y))/np.std(y)

return np.mean(x_su*y_su)

# Slope and intercept of regression line

def regress(table, column_x, column_y):

r = corr(table, column_x, column_y)

reg_slope = r*np.std(table[column_y])/np.std(table[column_x])

reg_int = np.mean(table[column_y]) - reg_slope*np.mean(table[column_x])

return np.array([reg_slope, reg_int])

# Fitted value; the regression estimate at x=new_x

def fit(table, column_x, column_y, new_x):

slope_int = regress(table, column_x, column_y)

return slope_int[0]*new_x + slope_int[1]

# Fitted values; the regression estimates lie on a straight line

def fitted_values(table, column_x, column_y):

slope_int = regress(table, column_x, column_y)

return slope_int[0]*table[column_x] + slope_int[1]

# Residuals: Deviations from the regression line

def residuals(table, column_x, column_y):

fitted = fitted_values(table, column_x, column_y)

return table[column_y] - fitted

# Scatter plot with fitted (regression) line

def scatter_fit(table, column_x, column_y):

plots.scatter(table[column_x], table[column_y], s=10)

plots.plot(table[column_x], fitted_values(table, column_x, column_y), lw=1, color='green')

plots.xlabel(column_x)

plots.ylabel(column_y)

# A residual plot

def residual_plot(table, column_x, column_y):

plots.scatter(table[column_x], residuals(table, column_x, column_y), s=10)

xm = np.min(table[column_x])

xM = np.max(table[column_x])

plots.plot([xm, xM], [0, 0], color='k', lw=1)

plots.xlabel(column_x)

plots.ylabel('residual')

Residual plots¶

Suppose you have carried out the regression of sons' heights on fathers' heights.

scatter_fit(heights, 'father', 'son')

It is a good idea to then draw a residual plot. This is a scatter plot of the residuals versus the values of $x$. The residual plot of a good regression looks like the one below: a formless cloud with no pattern, centered around the horizontal axis. It shows that there is no discernible non-linear pattern in the original scatter plot.

residual_plot(heights, 'father','son')

Residual plots can be useful for spotting non-linearity in the data, or other features that weaken the regression analysis. For example, consider the SAT data of the previous section, and suppose you try to estimate the Combined score based on Participation Rate.

sat2014 = Table.read_table('sat2014.csv')

sat2014

plots.scatter(sat2014['Participation Rate'], sat2014['Combined'], s=10)

plots.xlabel('Participation Rate')

plots.ylabel('Combined')

The relation between the variables is clearly non-linear, but you might be tempted to fit a straight line anyway, especially if you did not first draw the scatter diagram of the data.

scatter_fit(sat2014, 'Participation Rate', 'Combined')

plots.title("A bad idea")

The points in the scatter plot start out above the regression line, then are consistently below the line, then above, then below. This pattern of non-linearity is more clearly visible in the residual plot.

residual_plot(sat2014, 'Participation Rate', 'Combined')

plots.title('Residual plot of the bad regression')

This residual plot shows a non-linear pattern, and is a signal that linear regression should not have been used for these data.

The rough size of the residuals¶

Let us return to the heights of the fathers and sons, and compare the estimates based on using the regression line and the flat line (in yellow) at the average height of the sons. As noted above, the rough size of the errors made using the flat line is the SD of $y$. Clearly, the regression line does a better job of estimating sons' heights than the flat line does; indeed, it minimizes the mean squared error among all lines. Thus, the rough size of the errors made using the regression line must be smaller that that using the flat line. In other words, the SD of the residuals must be smaller than the overall SD of $y$.

ave_y = np.mean(heights['son'])

scatter_fit(heights, 'father', 'son')

plots.plot([np.min(heights['father']), np.max(heights['father'])], [ave_y, ave_y], lw=2, color='gold')

Here, once again, are the residuals in the estimation of sons' heights based on fathers' heights. Each residual is the difference between the height of a son and his estimated (or "fitted") height.

heights

The average of the residuals is 0. All the negative errors exactly cancel out all the positive errors.

The SD of the residuals is about 2.4 inches, while the overall SD of the sons' heights is about 2.7 inches. As expected, the SD of the residuals is smaller than the overall SD of $y$.

np.std(heights['residual'])

np.std(heights['father'])

Smaller by what factor? Another remarkable fact of mathematics is that no matter what the data look like, the SD of the residuals is $\sqrt{1-r^2}$ times the SD of $y$.

np.std(heights['residual'])/np.std(heights['son'])

np.sqrt(1 - r**2)

Average and SD of the Residuals¶

Regardless of the shape of the scatter plot:

average of the residuals = 0

SD of the residuals $~=~ \sqrt{1 - r^2} \cdot \mbox{SD of}~y$

The residuals are equal to the values of $y$ minus the fitted values. Since the average of the residuals is 0, the average of the fitted values must be equal to the average of $y$.

In the figure below, the fitted values are all on the green line segment. The center of that segment is at the point of averages, consistent with our calculation of the average of the fitted values.

scatter_fit(heights, 'father', 'son')

The SD of the fitted values is visibly smaller than the overall SD of $y$. The fitted values range from about 64 to about 73, whereas the values of $y$ range from about 58 to 77.

So if we take the ratio of the SD of the fitted values to the SD of $y$, we expect to get a number between 0 and 1. And indeed we do: a very special number between 0 and 1.

np.std(heights['fitted value'])/np.std(heights['son'])

r

Here is the final remarkable fact of mathematics in this section:

Average and SD of the Fitted Values¶

Regardless of the shape of the scatter plot:

average of the fitted values = the average of $y$

SD of the fitted values $~=~ |r| \cdot$ SD of $y$

Notice the absolute value of $r$ in the formula above. For the heights of fathers and sons, the correlation is positive and so there is no difference between using $r$ and using its absolute value. However, the result is true for variables that have negative correlation as well, provided we are careful to use the absolute value of $r$ instead of $r$.

Bootstrap for Regression

Assumptions of randomness: a "regression model"¶

In the last section, we developed the concepts of correlation and regression as ways to describe data. We will now see how these concepts can become powerful tools for inference, when used appropriately.

Questions of inference may arise if we believe that a scatter plot reflects the underlying relation between the two variables being plotted but does not specify the relation completely. For example, a scatter plot of the heights of fathers and sons shows us the precise relation between the two variables in one particular sample of men; but we might wonder whether that relation holds true, or almost true, among all fathers and sons in the population from which the sample was drawn, or indeed among fathers and sons in general.

As always, inferential thinking begins with a careful examination of the assumptions about the data. Sets of assumptions are known as models. Sets of assumptions about randomness in roughly linear scatter plots are called regression models.

In brief, such models say that the underlying relation between the two variables is perfectly linear; this straight line is the signal that we would like to identify. However, we are not able to see the line clearly. What we see are points that are scattered around the line. In each of the points, the signal has been contaminated by random noise. Our inferential goal, therefore, is to separate the signal from the noise.

In greater detail, the regression model specifies that the points in the scatter plot are generated at random as follows.

- The relation between $x$ and $y$ is perfectly linear. We cannot see this "true line" but Tyche can. She is the Goddess of Chance.

- Tyche creates the scatter plot by taking points on the line and pushing them off the line vertically, either above or below, as follows:

- For each $x$, Tyche finds the corresponding point on the true line, and then adds an error.

- The errors are drawn at random with replacement from a population of errors that has a normal distribution with mean 0.

- Tyche creates a point whose horizontal coordinate is $x$ and whose vertical coordinate is "the height of the true line at $x$, plus the error".

- Finally, Tyche erases the true line from the scatter, and shows us just the scatter plot of her points.

Based on this scatter plot, how should we estimate the true line? The best line that we can put through a scatter plot is the regression line. So the regression line is a natural estimate of the true line.

The simulation below shows how close the regression line is to the true line. The first panel shows how Tyche generates the scatter plot from the true line; the second show the scatter plot that we see; the third shows the regression line through the plot; and the fourth shows both the regression line and the true line.

Run the simulation a few times, with different values for the slope and intercept of the true line, and varying sample sizes. You will see that the regression line is a good estimate of the true line if the sample size is moderately large.

def draw_and_compare(true_slope, true_int, sample_size):

x = np.random.normal(50, 5, sample_size)

xlims = np.array([np.min(x), np.max(x)])

eps = np.random.normal(0, 6, sample_size)

y = (true_slope*x + true_int) + eps

tyche = Table([x,y],['x','y'])

plots.figure(figsize=(6, 16))

plots.subplot(4, 1, 1)

plots.scatter(tyche['x'], tyche['y'], s=10)

plots.plot(xlims, true_slope*xlims + true_int, lw=1, color='gold')

plots.title('What Tyche draws')

plots.subplot(4, 1, 2)

plots.scatter(tyche['x'],tyche['y'], s=10)

plots.title('What we get to see')

plots.subplot(4, 1, 3)

scatter_fit(tyche, 'x', 'y')

plots.xlabel("")

plots.ylabel("")

plots.title('Regression line: our estimate of true line')

plots.subplot(4, 1, 4)

scatter_fit(tyche, 'x', 'y')

xlims = np.array([np.min(tyche['x']), np.max(tyche['x'])])

plots.plot(xlims, true_slope*xlims + true_int, lw=1, color='gold')

plots.title("Regression line and true line")

# Tyche's true line,

# the points she creates,

# and our estimate of the true line.

# Arguments: true slope, true intercept, number of points

draw_and_compare(3, -5, 20)

In reality, of course, we are not Tyche, and we will never see the true line. What the simulation shows that if the regression model looks plausible, and we have a large sample, then regression line is a good approximation to the true line.

Here is an example where regression model can be used to make predictions.

The data are a subset of the information gathered in a randomized controlled trial about treatments for Hodgkin's disease. Hodgkin's disease is a cancer that typically affects young people. The disease is curable but the treatment is very harsh. The purpose of the trial was to come up with dosage that would cure the cancer but minimize the adverse effects on the patients.

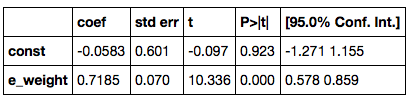

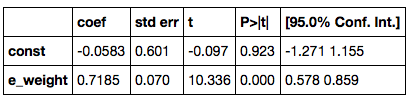

This table hodgkins contains data on the effect that the treatment had on the lungs of 22 patients. The columns are:

- Height in cm

- A measure of radiation to the mantle (neck, chest, under arms)

- A measure of chemotherapy

- A score of the health of the lungs at baseline, that is, at the start of the treatment; higher scores correspond to more healthy lungs

- The same score of the health of the lungs, 15 months after treatment

hodgkins = Table.read_table('hodgkins.csv')

hodgkins.show()

It is evident that the patients' lungs were less healthy 15 months after the treatment than at baseline. At 36 months, they did recover most of their lung function, but those data are not part of this table.

The scatter plot below shows that taller patients had higher scores at 15 months.

scatter_fit(hodgkins, 'height', 'month15')

Prediction¶

The scatter plot looks roughly linear. Let us assume that the regression model holds. Under that assumption, the regression line can be used to predict the 15-month score of a new patient, based on the patient's height. The prediction will be good provided the assumptions of the regression model are justified for these data, and provided the new patient is similar to those in the study.

The function regress gives us the slope and intercept of the regression line. To predict the 15-month score of a new patient whose height is $x$ cm, we use the following calculation:

Predicted 15-month score of a patient who is $x$ inches tall $~=~$ slope$\;\times\;x ~+~$ intercept

The predicted 15-month score of a patient who is 173 cm tall is 83.66 cm, and that of a patient who is 163 cm tall is 73.69 cm.

# slope and intercept

slope_int = regress(hodgkins, 'height', 'month15')

slope_int

# New patient, 173 cm tall

# Predicted 15-month score:

slope_int[0]*173 + slope_int[1]

# New patient, 163 cm tall

# Predicted 15-month score:

slope_int[0]*163 + slope_int[1]

The Variability of the Prediction¶

As data scientists working under the regression model, we know that the sample might have been different. Had the sample been different, the regression line would have been different too, and so would our prediction. To see how good our prediction is, we must get a sense of how variable the prediction can be.

One way to do this would be by generating new random samples of points and making a prediction based on each new sample. To generate new samples, we can bootstrap the scatter plot.

Specifically, we can simulate new samples by random sampling with replacement from the original scatter plot, as many times as there are points in the scatter.

Here is the original scatter diagram from the sample, and four replications of the bootstrap resampling procedure. Notice how the resampled scatter plots are in general a little more sparse than the original. That is because some of the original point do not get selected in the samples.

plots.figure(figsize=(6, 16))

plots.subplot(5, 1, 1)

plots.scatter(hodgkins['height'], hodgkins['month15'])

plots.title('Original sample')

plots.subplot(5,1,2)

rep = hodgkins.sample(with_replacement=True)

plots.scatter(rep['height'], rep['month15'])

plots.title('Bootstrap sample 1')

plots.subplot(5, 1, 3)

rep = hodgkins.sample(with_replacement=True)

plots.scatter(rep['height'], rep['month15'])

plots.title('Bootstrap sample 2')

plots.subplot(5, 1, 4)

rep = hodgkins.sample(with_replacement=True)

plots.scatter(rep['height'], rep['month15'])

plots.title('Bootstrap sample 3')

plots.subplot(5, 1, 5)

rep = hodgkins.sample(with_replacement=True)

plots.scatter(rep['height'], rep['month15'])

plots.title('Bootstrap sample 4')

The next step is to fit the regression line to the scatter plot in each replication, and make a prediction based on each line. The figure below shows 10 such lines, and the corresponding predicted 15-month scores for a patient whose height is 173 cm.

x = 173

h = hodgkins['height']

m15 = hodgkins['month15']

xlims = np.array([np.min(h), np.max(h)])

results = Table([[],[]], ['slope','intercept'])

for i in range(10):

rep = hodgkins.sample(with_replacement=True)

results.append(Table.from_rows([regress(rep, 'height','month15')], ['slope','intercept']))

results['prediction at x='+str(x)] = results['slope']*x + results['intercept']

left = xlims[0]*results['slope'] + results['intercept']

right = xlims[1]*results['slope'] + results['intercept']

fit_x = x*results['slope'] + results['intercept']

for i in range(10):

plots.plot(xlims, np.array([left[i], right[i]]), lw=1)

plots.scatter(x, fit_x[i])

The table below shows the slope and intercept of each of the 10 lines, along with the prediction.

results

Bootstrap prediction interval¶

If we increase the number of repetitions of the resampling process, we can generate an empirical histogram of the predictions. This will allow us to create a prediction interval, by the same methods that we used earlier to create bootstrap confidence intervals for numerical parameters.

# Bootstrap prediction at new_x

# Hodgkin's disease table, x = height, y = month15

def bootstrap_ht_m15(new_x, repetitions):

# For each repetition:

# Bootstrap the scatter;

# get the regression prediction at new_x;

# augment the predictions list

pred = []

for i in range(repetitions):

bootstrap_sample = hodgkins.sample(with_replacement=True)

p = fit(bootstrap_sample, 'height', 'month15', new_x)

pred.append(p)

# Prediction based on original sample

obs = fit(hodgkins, 'height', 'month15', new_x)

# Display results

pred = Table([pred], ['pred'])

pred.hist(bins=np.arange(55,100,2), normed=True)

plots.ylim(0, 0.1)

plots.xlabel('predictions at x='+str(new_x))

print('Height of regression line at x='+str(new_x)+':', obs)

print('Approximate 95%-confidence interval:')

print((pred.percentile(2.5).rows[0][0], pred.percentile(97.5).rows[0][0]))

bootstrap_ht_m15(173, 5000)

The figure above shows a bootstrap empirical histogram of the predicted 15-month score of a patient who is 173 cm tall. The empirical distribution is roughly normal. An approximate 95% prediction interval of scores ranges from about 74.7 to about 91.4. The prediction based on the original sample was about 83.6, which is close to the center of the interval.

The figure below shows the corresponding figure for a patient whose height is 163 cm. Notice that while this empirical histogram too is roughly bell shaped, the approximation is not as good as the one above for a given height of 173 cm. Also, the distribution is more spread out than the one above. That is because 163 cm is near the low end of the heights of the sampled patients, and there is not much data around a height of 163 cm. By contrast, several patients were 173 cm tall. Therefore we can make better predictions for patients who are 173 cm tall than for patients whose height is 163 cm.

bootstrap_ht_m15(163, 5000)

The function bootstrap_pred returns an approximate 95% prediction interval and a bootstrap empirical distribution of the prediction. Its arguments are:

- the name of the table containing the data

- the label of the column containing the known variable, $x$

- the label of the column containing the variable to be predicted, $y$

- the value of $x$ at which to make the prediction

- the number of repetitions of the bootstrap resampling procedure

In every repetition, the function draws a bootstrap sample and finds the fitted value at the specified value of $x$. Below, the function has been called to make a prediction at a height of 180 cm.

def bootstrap_pred(table, column_x, column_y, new_x, repetitions):

# For each repetition:

# Bootstrap the scatter;

# get the regression prediction at new_x

# augment the predictions list

pred = []

for i in range(repetitions):

bootstrap_sample = table.sample(with_replacement=True)

p = fit(bootstrap_sample, column_x, column_y, new_x)

pred.append(p)

# Prediction based on regression line through original sample

obs = fit(table, column_x, column_y, new_x)

# Display results

pred = Table([pred], ['pred'])

pred.hist(bins=20, normed=True)

plots.xlabel('predictions at x='+str(new_x))

print('Height of regression line at x='+str(new_x)+':', obs)

print('Approximate 95%-confidence interval:')

print((pred.percentile(2.5).rows[0][0], pred.percentile(97.5).rows[0][0]))

bootstrap_pred(hodgkins, 'height', 'month15', 180, 5000)

Is there a linear trend at all?¶

The bootstrap method can also be used to see whether there is any correlation between two variables.

From the scatter plot below, it appears that there is little or no correlation between the amont of radiation patients received and their 15-month score

scatter_fit(hodgkins, 'rad', 'month15')

To see whether this lack of linear relation is true, we can create a bootstrap confidence interval for the slope of the true line, analogously to the confidence intervals we constructed in earlier sections using the bootstrap percentile method.

The figure below shows the empirical distribution of the slopes and an approximate 95% confidence interval for the true slope. The interval runs from about -0.05 to 0.05, and contains the slope of 0. There is no strong reason to doubt that the slope of the true line is around 0.

def bootstrap_slope(table, column_x, column_y, repetitions):

# For each repetition:

# Bootstrap the scatter, get the slope of the regression line,

# augment the results list

slopes = []

for i in range(repetitions):

bootstrap_sample = table.sample(with_replacement=True)

slopes.append(regress(bootstrap_sample, column_x, column_y)[0])

# Slope of the regression line from the original sample

obs = regress(table, column_x, column_y)[0]

# Display results

slopes = Table([slopes],['slopes'])

slopes.hist(bins=20, normed=True)

plots.xlabel('slopes')

print('Slope of regression line:', obs)

print('Approximate 95%-confidence interval:')

print((slopes.percentile(2.5).rows[0][0], slopes.percentile(97.5).rows[0][0]))

bootstrap_slope(hodgkins, 'rad', 'month15', 5000)

Classification

This section will discuss machine learning. Machine learning is a class of techniques for automatically finding patterns in data and using it to draw inferences or make predictions. We're going to focus on a particular kind of machine learning, namely, classification.

Classification is about learning how to make predictions from past examples: we're given some examples where we have been told what the correct prediction was, and we want to learn from those examples how to make good predictions in the future. Here are a few applications where classification is used in practice:

For each order Amazon receives, Amazon would like to predict: is this order fraudulent? They have some information about each order (e.g., its total value, whether the order is being shipped to an address this customer has used before, whether the shipping address is the same as the credit card holder's billing address). They have lots of data on past orders, and they know whether which of those past orders were fraudulent and which weren't. They want to learn patterns that will help them predict, as new orders arrive, whether those new orders are fraudulent.

Online dating sites would like to predict: are these two people compatible? Will they hit it off? They have lots of data on which matches they've suggested to their customers in the past, and they have some idea which ones were successful. As new customers sign up, they'd like to predict make predictions about who might be a good match for them.

Doctors would like to know: does this patient have cancer? Based on the measurements from some lab test, they'd like to be able to predict whether the particular patient has cancer. They have lots of data on past patients, including their lab measurements and whether they ultimately developed cancer, and from that, they'd like to try to infer what measurements tend to be characteristic of cancer (or non-cancer) so they can diagnose future patients accurately.

Politicians would like to predict: are you going to vote for them? This will help them focus fundraising efforts on people who are likely to support them, and focus get-out-the-vote efforts on voters who will vote for them. Public databases and commercial databases have a lot of information about most people: e.g., whether they own a home or rent; whether they live in a rich neighborhood or poor neighborhood; their interests and hobbies; their shopping habits; and so on. And political campaigns have surveyed some voters and found out who they plan to vote for, so they have some examples where the correct answer is known. From this data, the campaigns would like to find patterns that will help them make predictions about all other potential voters.

All of these are classification tasks. Notice that in each of these examples, the prediction is a yes/no question -- we call this binary classification, because there are only two possible predictions. In a classification task, we have a bunch of observations. Each observation represents a single individual or a single situation where we'd like to make a prediction. Each observation has multiple attributes, which are known (e.g., the total value of the order; voter's annual salary; and so on). Also, each observation has a class, which is the answer to the question we care about (e.g., yes or no; fraudulent or not; etc.).

For instance, with the Amazon example, each order corresponds to a single observation. Each observation has several attributes (e.g., the total value of the order, whether the order is being shipped to an address this customer has used before, and so on). The class of the observation is either 0 or 1, where 0 means that the order is not fraudulent and 1 means that the order is fraudulent. Given the attributes of some new order, we are trying to predict its class.

Classification requires data. It involves looking for patterns, and to find patterns, you need data. That's where the data science comes in. In particular, we're going to assume that we have access to training data: a bunch of observations, where we know the class of each observation. The collection of these pre-classified observations is also called a training set. A classification algorithm is going to analyze the training set, and then come up with a classifier: an algorithm for predicting the class of future observations.

Note that classifiers do not need to be perfect to be useful. They can be useful even if their accuracy is less than 100%. For instance, if the online dating site occasionally makes a bad recommendation, that's OK; their customers already expect to have to meet many people before they'll find someone they hit it off with. Of course, you don't want the classifier to make too many errors -- but it doesn't have to get the right answer every single time.

Chronic kidney disease¶

Let's work through an example. We're going to work with a data set that was collected to help doctors diagnose chronic kidney disease (CKD). Each row in the data set represents a single patient who was treated in the past and whose diagnosis is known. For each patient, we have a bunch of measurements from a blood test. We'd like to find which measurements are most useful for diagnosing CKD, and develop a way to classify future patients as "has CKD" or "doesn't have CKD" based on their blood test results.

Let's load the data set into a table and look at it.

ckd = Table.read_table('ckd.csv')

ckd

We have data on 158 patients. There are an awful lot of attributes here. The column labelled "Class" indicates whether the patient was diagnosed with CKD: 1 means they have CKD, 0 means they do not have CKD.

Let's look at two columns in particular: the hemoglobin level (in the patient's blood), and the blood glucose level (at a random time in the day; without fasting specially for the blood test). We'll draw a scatter plot, to make it easy to visualize this. Red dots are patients with CKD; blue dots are patients without CKD. What test results seem to indicate CKD?

plots.figure(figsize=(8,8))

plots.scatter(ckd['Hemoglobin'], ckd['Blood Glucose Random'], c=ckd['Class'], s=30)

plots.xlabel('Hemoglobin')

plots.ylabel('Glucose')

Suppose Alice is a new patient who is not in the data set. If I tell you Alice's hemoglobin level and blood glucose level, could you predict whether she has CKD? It sure looks like it! You can see a very clear pattern here: points in the lower-right tend to represent people who don't have CKD, and the rest tend to be folks with CKD. To a human, the pattern is obvious. But how can we program a computer to automatically detect patterns such as this one?

Well, there are lots of kinds of patterns one might look for, and lots of algorithms for classification. But I'm going to tell you about one that turns out to be surprisingly effective. It is called nearest neighbor classification. Here's the idea. If we have Alice's hemoglobin and glucose numbers, we can put her somewhere on this scatterplot; the hemoglobin is her x-coordinate, and the glucose is her y-coordinate. Now, to predict whether she has CKD or not, we find the nearest point in the scatterplot and check whether it is red or blue; we predict that Alice should receive the same diagnosis as that patient.

In other words, to classify Alice as CKD or not, we find the patient in the training set who is "nearest" to Alice, and then use that patient's diagnosis as our prediction for Alice. The intuition is that if two points are near each other in the scatterplot, then the corresponding measurements are pretty similar, so we might expect them to receive the same diagnosis (more likely than not). We don't know Alice's diagnosis, but we do know the diagnosis of all the patients in the training set, so we find the patient in the training set who is most similar to Alice, and use that patient's diagnosis to predict Alice's diagnosis.

The scatterplot suggests that this nearest neighbor classifier should be pretty accurate. Points in the lower-right will tend to receive a "no CKD" diagnosis, as their nearest neighbor will be a blue point. The rest of the points will tend to receive a "CKD" diagnosis, as their nearest neighbor will be a red point. So the nearest neighbor strategy seems to capture our intuition pretty well, for this example.

However, the separation between the two classes won't always be quite so clean. For instance, suppose that instead of hemoglobin levels we were to look at white blood cell count. Look at what happens:

plots.figure(figsize=(8,8))

plots.scatter(ckd['White Blood Cell Count'], ckd['Blood Glucose Random'], c=ckd['Class'], s=30)

plots.xlabel('White Blood Cell Count')

plots.ylabel('Glucose')

As you can see, non-CKD individuals are all clustered in the lower-left. Most of the patients with CKD are above or to the right of that cluster... but not all. There are some patients with CKD who are in the lower left of the above figure (as indicated by the handful of red dots scattered among the blue cluster). What this means is that you can't tell for certain whether someone has CKD from just these two blood test measurements.

If we are given Alice's glucose level and white blood cell count, can we predict whether she has CKD? Yes, we can make a prediction, but we shouldn't expect it to be 100% accurate. Intuitively, it seems like there's a natural strategy for predicting: plot where Alice lands in the scatter plot; if she is in the lower-left, predict that she doesn't have CKD, otherwise predict she has CKD. This isn't perfect -- our predictions will sometimes be wrong. (Take a minute and think it through: for which patients will it make a mistake?) As the scatterplot above indicates, sometimes people with CKD have glucose and white blood cell levels that look identical to those of someone without CKD, so any classifier is inevitably going to make the wrong prediction for them.

Can we automate this on a computer? Well, the nearest neighbor classifier would be a reasonable choice here too. Take a minute and think it through: how will its predictions compare to those from the intuitive strategy above? When will they differ? Its predictions will be pretty similar to our intuitive strategy, but occasionally it will make a different prediction. In particular, if Alice's blood test results happen to put her right near one of the red dots in the lower-left, the intuitive strategy would predict "not CKD", whereas the nearest neighbor classifier will predict "CKD".

There is a simple generalization of the nearest neighbor classifier that fixes this anomaly. It is called the k-nearest neighbor classifier. To predict Alice's diagnosis, rather than looking at just the one neighbor closest to her, we can look at the 3 points that are closest to her, and use the diagnosis for each of those 3 points to predict Alice's diagnosis. In particular, we'll use the majority value among those 3 diagnoses as our prediction for Alice's diagnosis. Of course, there's nothing special about the number 3: we could use 4, or 5, or more. (It's often convenient to pick an odd number, so that we don't have to deal with ties.) In general, we pick a number $k$, and our predicted diagnosis for Alice is based on the $k$ patients in the training set who are closest to Alice. Intuitively, these are the $k$ patients whose blood test results were most similar to Alice, so it seems reasonable to use their diagnoses to predict Alice's diagnosis.

The $k$-nearest neighbor classifier will now behave just like our intuitive strategy above.

Decision boundary¶

Sometimes a helpful way to visualize a classifier is to map the region of space where the classifier would predict 'CKD', and the region of space where it would predict 'not CKD'. We end up with some boundary between the two, where points on one side of the boundary will be classified 'CKD' and points on the other side will be classified 'not CKD'. This boundary is called the decision boundary. Each different classifier will have a different decision boundary; the decision boundary is just a way to visualize what criteria the classifier is using to classify points.

Banknote authentication¶

Let's do another example. This time we'll look at predicting whether a banknote (e.g., a \$20 bill) is counterfeit or legitimate. Researchers have put together a data set for us, based on photographs of many individual banknotes: some counterfeit, some legitimate. They computed a few numbers from each image, using techniques that we won't worry about for this course. So, for each banknote, we know a few numbers that were computed from a photograph of it as well as its class (whether it is counterfeit or not). Let's load it into a table and take a look.

banknotes = Table.read_table('banknote.csv')

banknotes

Let's look at whether the first two numbers tell us anything about whether the banknote is counterfeit or not. Here's a scatterplot:

plots.figure(figsize=(8,8))

plots.scatter(banknotes['WaveletVar'], banknotes['WaveletCurt'], c=banknotes['Class'])

Pretty interesting! Those two measurements do seem helpful for predicting whether the banknote is counterfeit or not. However, in this example you can now see that there is some overlap between the blue cluster and the red cluster. This indicates that there will be some images where it's hard to tell whether the banknote is legitimate based on just these two numbers. Still, you could use a $k$-nearest neighbor classifier to predict the legitimacy of a banknote.

Take a minute and think it through: Suppose we used $k=11$ (say). What parts of the plot would the classifier get right, and what parts would it make errors on? What would the decision boundary look like?

The patterns that show up in the data can get pretty wild. For instance, here's what we'd get if used a different pair of measurements from the images:

plots.figure(figsize=(8,8))

plots.scatter(banknotes['WaveletSkew'], banknotes['Entropy'], c=banknotes['Class'])

There does seem to be a pattern, but it's a pretty complex one. Nonetheless, the $k$-nearest neighbors classifier can still be used and will effectively "discover" patterns out of this. This illustrates how powerful machine learning can be: it can effectively take advantage of even patterns that we would not have anticipated, or that we would have thought to "program into" the computer.

Multiple attributes¶

So far I've been assuming that we have exactly 2 attributes that we can use to help us make our prediction. What if we have more than 2? For instance, what if we have 3 attributes?

Here's the cool part: you can use the same ideas for this case, too. All you have to do is make a 3-dimensional scatterplot, instead of a 2-dimensional plot. You can still use the $k$-nearest neighbors classifier, but now computing distances in 3 dimensions instead of just 2. It just works. Very cool!

In fact, there's nothing special about 2 or 3. If you have 4 attributes, you can use the $k$-nearest neighbors classifier in 4 dimensions. 5 attributes? Work in 5-dimensional space. And no need to stop there! This all works for arbitrarily many attributes; you just work in a very high dimensional space. It gets wicked-impossible to visualize, but that's OK. The computer algorithm generalizes very nicely: all you need is the ability to compute the distance, and that's not hard. Mind-blowing stuff!

For instance, let's see what happens if we try to predict whether a banknote is counterfeit or not using 3 of the measurements, instead of just 2. Here's what you get:

ax = plots.figure(figsize=(8,8)).add_subplot(111, projection='3d')

ax.scatter(banknotes['WaveletSkew'], banknotes['WaveletVar'], banknotes['WaveletCurt'], c=banknotes['Class'])

Awesome! With just 2 attributes, there was some overlap between the two clusters (which means that the classifier was bound to make some mistakes for pointers in the overlap). But when we use these 3 attributes, the two clusters have almost no overlap. In other words, a classifier that uses these 3 attributes will be more accurate than one that only uses the 2 attributes.

This is a general phenomenom in classification. Each attribute can potentially give you new information, so more attributes sometimes helps you build a better classifier. Of course, the cost is that now we have to gather more information to measure the value of each attribute, but this cost may be well worth it if it significantly improves the accuracy of our classifier.

To sum up: you now know how to use $k$-nearest neighbor classification to predict the answer to a yes/no question, based on the values of some attributes, assuming you have a training set with examples where the correct prediction is known. The general roadmap is this:

- identify some attributes that you think might help you predict the answer to the question;

- gather a training set of examples where you know the values of the attributes as well as the correct prediction;

- to make predictions in the future, measure the value of the attributes and then use $k$-nearest neighbor classification to predict the answer to the question.

Breast cancer diagnosis¶

Now I want to do a more extended example based on diagnosing breast cancer. I was inspired by Brittany Wenger, who won the Google national science fair three years ago as a 17-year old high school student. Here's Brittany:

Brittany's science fair project was to build a classification algorithm to diagnose breast cancer. She won grand prize for building an algorithm whose accuracy was almost 99%.

Let's see how well we can do, with the ideas we've learned in this course.

So, let me tell you a little bit about the data set. Basically, if a woman has a lump in her breast, the doctors may want to take a biopsy to see if it is cancerous. There are several different procedures for doing that. Brittany focused on fine needle aspiration (FNA), because it is less invasive than the alternatives. The doctor gets a sample of the mass, puts it under a microscope, takes a picture, and a trained lab tech analyzes the picture to determine whether it is cancer or not. We get a picture like one of the following:

Unfortunately, distinguishing between benign vs malignant can be tricky. So, researchers have studied using machine learning to help with this task. The idea is that we'll ask the lab tech to analyze the image and compute various attributes: things like the typical size of a cell, how much variation there is among the cell sizes, and so on. Then, we'll try to use this information to predict (classify) whether the sample is malignant or not. We have a training set of past samples from women where the correct diagnosis is known, and we'll hope that our machine learning algorithm can use those to learn how to predict the diagnosis for future samples.

We end up with the following data set. For the "Class" column, 1 means malignant (cancer); 0 means benign (not cancer).

patients = Table.read_table('breast-cancer.csv')

patients = patients.drop('ID')

patients

So we have 9 different attributes. I don't know how to make a 9-dimensional scatterplot of all of them, so I'm going to pick two and plot them:

plots.figure(figsize=(8,8))

plots.scatter(patients['Bland Chromatin'], patients['Single Epithelial Cell Size'], c=patients['Class'], s=30)

plots.xlabel('Bland Chromatin')

plots.ylabel('Single Epithelial Cell Size')

Oops. That plot is utterly misleading, because there are a bunch of points that have identical values for both the x- and y-coordinates. To make it easier to see all the data points, I'm going to add a little bit of random jitter to the x- and y-values. Here's how that looks:

def randomize_column(a):

return a + np.random.normal(0.0, 0.09, size=len(a))

plots.figure(figsize=(8,8))

plots.scatter(randomize_column(patients['Bland Chromatin']), randomize_column(patients['Single Epithelial Cell Size']), c=patients['Class'], s=30)

plots.xlabel('Bland Chromatin (jittered)')

plots.ylabel('Single Epithelial Cell Size (jittered)')

For instance, you can see there are lots of samples with chromatin = 2 and epithelial cell size = 2; all non-cancerous.

Keep in mind that the jittering is just for visualization purposes, to make it easier to get a feeling for the data. When we want to work with the data, we'll use the original (unjittered) data.

Applying the k-nearest neighbor classifier to breast cancer diagnosis¶

We've got a data set. Let's try out the $k$-nearest neighbor classifier and see how it does. This is going to be great.

We're going to need an implementation of the $k$-nearest neighbor classifier. In practice you would probably use an existing library, but it's simple enough that I'm going to imeplment it myself.

The first thing we need is a way to compute the distance between two points. How do we do this? In 2-dimensional space, it's pretty easy. If we have a point at coordinates $(x_0,y_0)$ and another at $(x_1,y_1)$, the distance between them is

$$D = \sqrt{(x_0-x_1)^2 + (y_0-y_1)^2}.$$(Where did this come from? It comes from the Pythogorean theorem: we have a right triangle with side lengths $x_0-x_1$ and $y_0-y_1$, and we want to find the length of the diagonal.)

In 3-dimensional space, the formula is

$$D = \sqrt{x_0-x_1)^2 + (y_0-y_1)^2 + (z_0-z_1)^2}.$$In $k$-dimensional space, things are a bit harder to visualize, but I think you can see how the formula generalized: we sum up the squares of the differences between each individual coordinate, and then take the square root of that. Let's implement a function to compute this distance function for us:

def distance(pt1, pt2):

tot = 0

for i in range(len(pt1)):

tot = tot + (pt1[i] - pt2[i])**2

return math.sqrt(tot)

Next, we're going to write some code to implement the classifier. The input is a patient p who we want to diagnose. The classifier works by finding the $k$ nearest neighbors of p from the training set. So, our approach will go like this:

Find the closest $k$ neighbors of

p, i.e., the $k$ patients from the training set that are most similar top.Look at the diagnoses of those $k$ neighbors, and take the majority vote to find the most-common diagnosis. Use that as our predicted diagnosis for

p.

So that will guide the structure of our Python code.

To implement the first step, we will compute the distance from each patient in the training set to p, sort them by distance, and take the $k$ closest patients in the training set. The code will make a copy of the table, compute the distance from each patient to p, add a new column to the table with those distances, and then sort the table by distance and take the first $k$ rows. That leads to the following Python code:

def closest(training, p, k):

...

def majority(topkclasses):

...

def classify(training, p, k):

kclosest = closest(training, p, k)

kclosest.classes = kclosest.select('Class')

return majority(kclosest)

def computetablewithdists(training, p):

dists = np.zeros(training.num_rows)

attributes = training.drop('Class').rows

for i in range(training.num_rows):

dists[i] = distance(attributes[i], p)

withdists = training.copy()

withdists.append_column('Distance', dists)

return withdists

def closest(training, p, k):

withdists = computetablewithdists(training, p)

sortedbydist = withdists.sort('Distance')

topk = sortedbydist.take(range(k))

return topk

def majority(topkclasses):

if topkclasses.where('Class', 1).num_rows > topkclasses.where('Class', 0).num_rows:

return 1

else:

return 0

def classify(training, p, k):

closestk = closest(training, p, k)